The $700 Billion AI Race No One Can Afford To Lose. Or Win.

- Published 15 May 2026

In the Netflix series Squid Game, hundreds of debt-ridden contestants volunteer for a series of children's games with a deadly twist. Most know they will probably die. But they stay anyway, because returning to their crushing debts feels worse than a slim chance at survival inside the arena. The twist? Even the winners leave damaged.

In 2026, the world's largest technology companies are playing their own version of Squid Game. Except the arena is artificial intelligence, the entry fee is measured in lakhs of crores of rupees, and the prize (if it exists at all) remains maddeningly unclear.

This race began in earnest on 30 November 2022.

That was the day a then-obscure American research lab called OpenAI released ChatGPT: the first artificial intelligence tool that could draft an email, write working computer code, explain a contract or hold a research-grade conversation, all at roughly the level of a competent junior employee. ChatGPT crossed ten lakh users in five days and ten crore users in two months, the fastest consumer technology adoption ever recorded.

Within weeks, Microsoft committed roughly $10 billion to OpenAI. Google declared an internal Code Red, the company's highest-level crisis designation. Meta quietly abandoned its metaverse pivot. Anthropic, a rival lab founded by former OpenAI researchers, raised billions to build Claude, a competing AI assistant now used by major banks, hospitals and law firms worldwide. And every large technology company concluded the same thing: be in this race, or be irrelevant within five years.

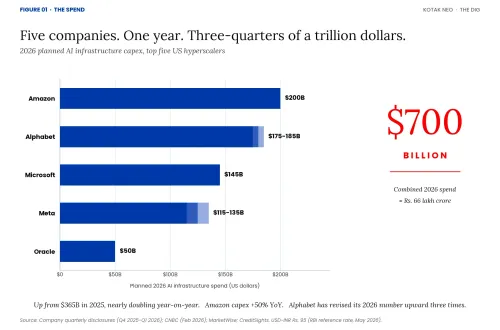

Amazon will spend $200 billion on AI infrastructure this year: the data centres, specialised computer chips and electrical capacity AI requires to run at scale. Alphabet has committed $175 to $185 billion. Microsoft, $145 billion. Meta, between $115 and $135 billion. Oracle, $50 billion. Combined, these five companies (known in the industry as the hyperscalers, because they operate cloud computing at a scale no smaller firm can match) are pouring nearly $700 billion, roughly ₹66 lakh crore at an exchange rate of ₹95 to the dollar, into a single year of AI infrastructure.

Figure 01 · The Spend

That is nearly double the $365 billion these same companies spent in 2025. Amazon's capex is up 50% year-on-year. Alphabet has revised its number upward three times, from an initial $71 to $73 billion to as much as $185 billion. The bill is crushing free cash flows. Amazon's could go negative in 2026. Alphabet's could plummet 90%. And the companies are issuing unprecedented levels of debt to keep up.
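The headline figures above can be checked with simple arithmetic. The sketch below is illustrative only: it takes the company-level numbers and the ₹95 exchange rate exactly as quoted in this article, and the function names are made up for this example.

```python
# Announced 2026 AI capex in billions of US dollars, as (low, high) ranges
# taken from the figures quoted in this article.
CAPEX_2026 = {
    "Amazon": (200, 200),
    "Alphabet": (175, 185),
    "Microsoft": (145, 145),
    "Meta": (115, 135),
    "Oracle": (50, 50),
}
CAPEX_2025_TOTAL = 365  # billions of USD, as quoted in this article
INR_PER_USD = 95        # exchange rate used in this article


def total_range(capex):
    """Sum the low ends and the high ends of each company's range."""
    low = sum(lo for lo, _ in capex.values())
    high = sum(hi for _, hi in capex.values())
    return low, high


def usd_bn_to_lakh_crore(usd_bn, rate=INR_PER_USD):
    """Convert billions of dollars to lakh crore rupees (1 lakh crore = 1e12)."""
    return usd_bn * 1e9 * rate / 1e12


low, high = total_range(CAPEX_2026)
midpoint = (low + high) / 2

print(f"Combined 2026 capex: ${low}bn to ${high}bn (midpoint ${midpoint:.0f}bn)")
print(f"In rupees: about {usd_bn_to_lakh_crore(midpoint):.1f} lakh crore")
print(f"Multiple of 2025 spend: {midpoint / CAPEX_2025_TOTAL:.2f}x")
```

The midpoint of the ranges lands on the $700 billion headline, which is a little over 1.9 times the 2025 figure.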

The four largest hyperscalers raised $108 billion in debt during 2025 alone, with $1.5 trillion in total debt issuance projected over the coming years. Oracle, facing $156 billion in infrastructure commitments, raised $50 billion in new debt and equity, had its credit outlook cut by Moody's (a global credit rating agency), and laid off up to 30,000 employees to generate $8 to $10 billion in additional free cash flow.

And yet, for most of 2024 and 2025, markets cheered. Meta's stock surged 11% and Microsoft's jumped 4% the moment they announced larger spending plans. Investors were not rewarding profits. They were rewarding commitment to the race.

These companies cannot stop. Not because the payoff is guaranteed (it is not). Not because all investors are comfortable with the scale (many are not). They cannot stop because sitting this one out guarantees something worse: watching rivals pull ahead while you explain to investors why you chose to be the next Nokia.

Welcome to the AI prisoner's dilemma: the rational choice for each player produces the worst outcome for everyone.

Why This Technology Leap Is Creating The Prisoner's Dilemma

What transforms AI from a normal technology cycle into an inescapable prisoner's dilemma? Time. Or rather, the complete absence of it.

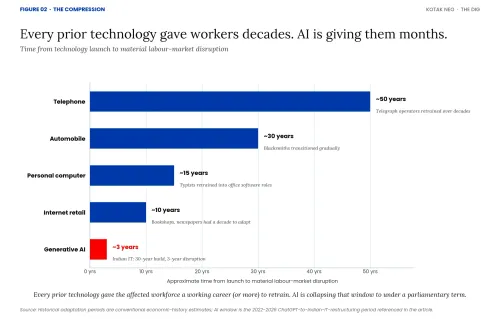

When the telephone was invented, people asked: how will this speed up communication? Could this save lives in emergencies? Those questions assumed agency because there was time to find answers. The telephone took over fifty years to reach market saturation. Telegraph operators did not lose their jobs overnight. They had decades to retrain, to move into new roles that the telephone itself created.

When the automobile arrived, horses were not replaced in a year. Blacksmiths did not wake up one Monday to find their entire profession obsolete. The transition took decades. There was time to build petrol pumps, train mechanics, establish driving schools. An entire ecosystem evolved gradually.

When the internet emerged in the 1990s, retail did not collapse in 1995 when Amazon launched. It transformed gradually. Bookshops had time to adapt. Newspapers had a decade to experiment with digital models before the revenue crash came.

Now, with AI, the dominant question is different. Will this take my job?

The shift is not just psychological. It is temporal. GPT-3 launched in June 2020. GPT-4 in March 2023, less than three years later, with capabilities that felt like a generational leap. Claude 3 arrived in March 2024. Multimodal models that can see, hear and reason appeared within months. AI coding assistants went from experimental to production-ready in roughly eighteen months.

Indian IT companies spent thirty years building a $250 billion export industry. That industry is now being fundamentally disrupted in under three years. There is no time to adapt. A telegraph operator in 1880 had an entire career to transition. A bookshop owner in 2000 had a decade. A junior engineer laid off in 2026 has, perhaps, eighteen months before the next wave of automation hits.

Figure 02 · The Compression

The companies driving this acceleration know they are moving too fast. But they cannot slow down. In a race moving this fast, slowing down, even slightly, means you have already lost.

This is what turns the race into a prisoner's dilemma. In every previous technology cycle, the option to wait existed. You could let your rivals spend first, watch which bets paid off, and follow the winners at a fraction of the cost. The fast follower often beat the pioneer. Facebook beat MySpace. Google beat Yahoo. iPhone beat BlackBerry. None of those winners moved first. They moved second, with better information. AI has closed that door. The leader who builds the dominant model and the dominant cloud now is the leader who locks in enterprise contracts, training data and developer ecosystems before anyone else has time to react. By the time a slower-moving rival has observed the outcome, the moat is already dug.

What that means in practice: every hyperscaler has to spend ahead of the demand it can prove, because waiting for proof guarantees coming second. Microsoft has to spend on the assumption that Google will spend. Google has to spend on the assumption that Amazon will. Each conclusion is rational at the individual level. But when every firm draws the same conclusion at the same time, the combined result is roughly $700 billion of capacity built before the revenue exists to support it. The cooperative outcome, a slower buildout matched to actual demand, would leave every firm better off. None of them can risk being the one that holds back while the others race. That is a prisoner's dilemma. The compression of time is what created it.
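The logic of the paragraph above can be made concrete with a toy two-firm payoff table. This is a minimal sketch, not a model of any real company: the payoff numbers are hypothetical and chosen only to have the classic prisoner's dilemma ordering, and the names `PAYOFF`, `best_response` and `nash_equilibria` are invented for illustration.

```python
from itertools import product

STRATEGIES = ("hold", "spend")

# Hypothetical payoffs in arbitrary units.
# PAYOFF[(a, b)] = (payoff to firm A, payoff to firm B).
PAYOFF = {
    ("hold", "hold"): (3, 3),    # slower buildout matched to actual demand
    ("hold", "spend"): (0, 4),   # A holds back while B locks in the market
    ("spend", "hold"): (4, 0),   # the mirror image
    ("spend", "spend"): (1, 1),  # both build capacity ahead of revenue
}


def best_response(opponent_choice):
    """Firm A's best reply to a fixed choice by firm B."""
    return max(STRATEGIES, key=lambda s: PAYOFF[(s, opponent_choice)][0])


def nash_equilibria():
    """Pure-strategy profiles where neither firm gains by deviating alone."""
    equilibria = []
    for a, b in product(STRATEGIES, repeat=2):
        a_ok = all(PAYOFF[(a, b)][0] >= PAYOFF[(alt, b)][0] for alt in STRATEGIES)
        b_ok = all(PAYOFF[(a, b)][1] >= PAYOFF[(a, alt)][1] for alt in STRATEGIES)
        if a_ok and b_ok:
            equilibria.append((a, b))
    return equilibria


for choice in STRATEGIES:
    print(f"If the rival chooses {choice!r}, the best reply is {best_response(choice)!r}")
print("Equilibria:", nash_equilibria())
```

With these assumed payoffs, spending is the best reply whatever the rival does, so the only equilibrium is for both firms to spend, even though both would score higher if both held back.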

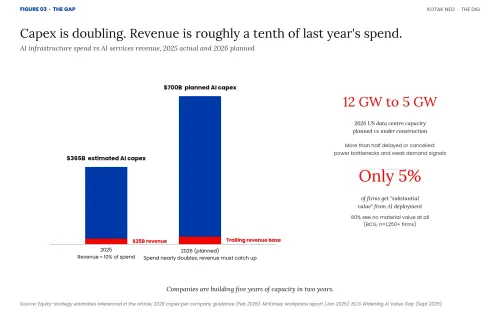

Why 95% Are Losing This Race

The hyperscalers are betting $700 billion that enterprise demand for AI compute will justify a massive infrastructure buildout. Yet AI-related services generated only about $25 billion in revenue in 2025: less than a tenth of the $365 billion these companies spent on infrastructure that year. The evidence suggests they are overbuilding. Approximately 12 gigawatts of data centre capacity was planned to come online in the US in 2026. Only 5 gigawatts is under construction. The other 7 gigawatts, more than half, has been delayed or cancelled because of power grid bottlenecks, electrical component shortages and insufficient demand signals.

Figure 03 · The Gap

But here is a puzzle worth resolving. Demand for AI services at the individual company level is real. TCS reports $2.3 billion in annualised AI revenue. 87% of Indian businesses and 84% of UK organisations are deploying AI in some form. At the company level, AI is genuinely cutting costs. So if AI is working, why is it not paying off for the firms building it?

The answer is in the structure. The value being captured at the company level is incremental, not transformational. You can save 30% on a process with AI. That is real money. But when every competitor does the same thing, no one gains advantage. Clients simply demand lower prices to match. The gains get competed away within quarters. This is exactly what is happening to Indian IT firms.

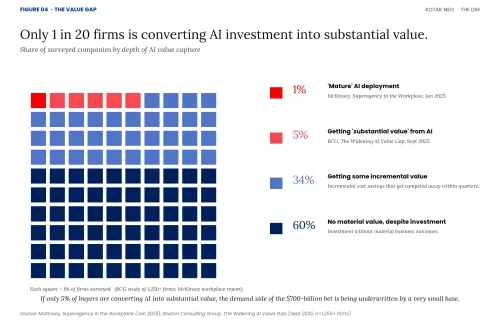

And only a tiny fraction of companies are getting more than incremental value. Only 1% of leaders describe their companies as "mature" in AI deployment, according to McKinsey's January 2025 workplace report. Only 5% are getting "substantial value", according to Boston Consulting Group's 2025 study of more than 1,250 firms. The same study found that 60% of companies are achieving no material AI value at all, despite substantial investment. BCG calls this The Widening AI Value Gap. The spread is real and growing.

Figure 04 · The Value Gap

The infrastructure providers face the worst version of this trap. The parallel to 2001's fibre optic bubble grows harder to ignore. By that year, an estimated 95% of installed fibre optic cable remained "dark", unused and unlit. Overcapacity collapsed pricing by more than 90%. Global telecom stocks lost over $2 trillion in market value. WorldCom, Global Crossing and numerous other telecom giants of the dotcom era filed for bankruptcy. The same dynamics now threaten AI infrastructure. Companies are building five years of capacity in two years, creating a period of overcapacity that could pressure margins and valuations for a generation.

Why Markets Cheered, And Why That Is Starting To Change

If the spending is this large and the returns this thin, the natural question is why markets did not discipline this behaviour earlier. Through 2024 and 2025, the verdict was the opposite: the more you spent, the better your stock performed. Four arguments sustained that enthusiasm.

The first was the AWS precedent. When Amazon began pouring billions into cloud computing infrastructure two decades ago, analysts called it reckless and the stock fell. That infrastructure became Amazon Web Services, which today generates more than $100 billion (roughly ₹9.5 lakh crore) in annual revenue and earns most of Amazon's operating profit. Investors are betting that one of these AI bets (Google's custom AI chips, Microsoft's Azure-OpenAI integration, Amazon's Trainium processors) becomes the next AWS. They are pricing optionality, not earnings.

The second was the moat thesis. Analysts described Alphabet's data centre and chip build-out as creating a competitive advantage so wide that no rival could catch up. A moat purchased today with debt looks expensive on a quarterly view, but invaluable over the next decade.

The third was accounting timing. Capital expenditure does not hit a company's profit and loss statement immediately. It is depreciated over the useful life of the asset, typically five to ten years. A $200 billion spending year does not produce a $200 billion earnings hit. The damage is amortised gradually, which makes the short-term reported numbers far more palatable than the cash flow statements suggest.

The fourth was simple narrative dominance. AI is the only macro growth story large enough to absorb the trillions of dollars seeking returns in global equity markets. If you must be invested in equities, and you must own the largest companies by market capitalisation, you own the AI capex story by default. Passive index funds make this almost compulsory.
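The accounting-timing argument above is easy to illustrate. The sketch below assumes plain straight-line depreciation with no salvage value and a full first year of use, which is a simplification of how any real company books its assets. The function name is hypothetical.

```python
def straight_line_schedule(capex_bn, useful_life_years):
    """Annual depreciation charges (in $bn) under straight-line depreciation.

    Simplifying assumptions: no salvage value, and the asset is in service
    for the whole of every year, including the first.
    """
    annual_charge = capex_bn / useful_life_years
    return [annual_charge] * useful_life_years


CAPEX_BN = 200  # the $200 billion spending year used as the example in the text

for life in (5, 10):
    schedule = straight_line_schedule(CAPEX_BN, life)
    print(
        f"{life}-year life: ${schedule[0]:.0f}bn hits earnings each year, "
        f"versus ${CAPEX_BN}bn of cash out in year one"
    )
```

Under these assumptions the reported annual charge is $40 billion on a five-year life and $20 billion on a ten-year life, while the cash flow statement carries the full $200 billion immediately.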

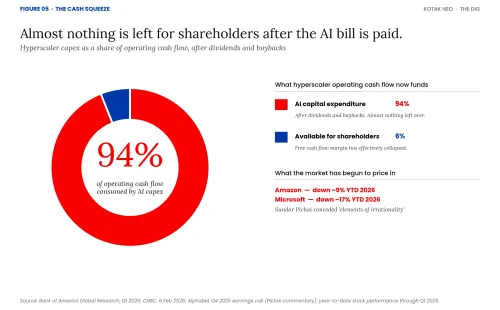

That equation has started to fray in 2026. After the latest round of spending announcements in February, Amazon's stock fell 6% on the day and is down roughly 9% year-to-date. Microsoft is down 17%. Even Sundar Pichai, Alphabet's chief executive, publicly conceded "elements of irrationality" in the current pace. Bank of America now calculates that hyperscaler capital expenditure consumes 94% of operating cash flow after dividends and buybacks. There is almost nothing left for shareholders.

Figure 05 · The Cash Squeeze

In short, markets did not reward this behaviour because they had carefully analysed long-term returns. They rewarded it because the alternatives looked worse, the accounting allowed it, and no competing narrative could absorb the capital. That is now changing, not because investors have suddenly become long-term thinkers, but because the short-term cash flow damage has become too large to amortise away.

The China Mirror

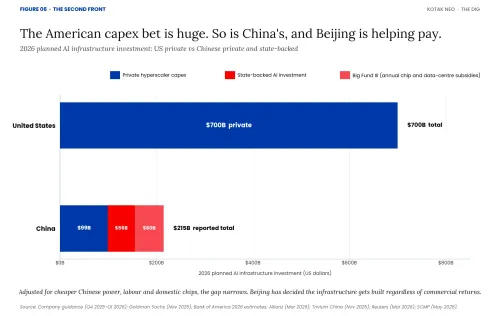

The race has a second front that most Indian investors underestimate. China is running its own version of the same overinvestment cycle, partly state-financed.

Chinese hyperscalers will spend roughly $99 billion on AI infrastructure in 2026, about one-seventh of the US figure in absolute terms, but considerably closer once you adjust for cheaper power, labour and lower-cost domestic chips. Alibaba is leading the private-sector charge with $52 billion committed over three years, which has already pushed its free cash flow negative. ByteDance has raised its 2026 capex to over $30 billion. Tencent spent about $11.5 billion in 2025, constrained by US export controls on advanced AI chips, and has pledged a larger 2026 number.

State support is significant. The central government's Big Fund III, China's state-backed national semiconductor and AI investment vehicle, is providing $50 to $70 billion in annual subsidies for AI chips and data centres. Bank of America estimates state-backed AI investment will reach $56 billion in 2026, with another $70 billion under consideration. Local governments are issuing "computing power vouchers": direct subsidies letting startups access AI compute below market rates. Several are subsidising data centre electricity bills by up to 50%, leveraging China's one structural advantage over the US: abundant cheap power.

Figure 06 · The Second Front

Adoption tells the most interesting story. China's daily AI token calls (the basic units AI models process) exceeded 140 trillion by the end of March 2026, a thousandfold increase from the start of 2024. ByteDance's Doubao chatbot alone has 345 million monthly active users. The National Data Administration has formally elevated tokens to the status of a national economic indicator.

But the same demand-side problem that haunts the American bull case haunts the Chinese one. Consumers and enterprises in China rarely pay for software. The top Chinese AI chatbots are all free. Hundreds of data centres built in 2023 and 2024 are sitting unused because GPU rental turned out to be unprofitable, and many were built primarily to capture subsidies rather than serve real commercial demand. The token volume is real. The willingness to pay for those tokens at sustainable margins is not yet established.

What this means for the prisoner's dilemma argument is that the race has no off-ramp on either side. American hyperscalers cannot moderate because they are racing against a state-backed Chinese competitor that simply cannot be allowed to win. Chinese hyperscalers cannot moderate because they are racing against American giants with deeper free-market capital and superior chips. Beijing has effectively decided that even if commercial returns disappoint, the strategic infrastructure must be built. The result is overcapacity being constructed simultaneously on both sides of the Pacific, by participants who each have different but equally compelling reasons to keep spending.

Why They Cannot Stop, Even If They Wanted To

Even on the American side alone, coordination is impossible. Microsoft's Satya Nadella cannot call Sundar Pichai at Alphabet to propose a spending ceasefire: it would violate antitrust law. And given the Chinese pressure just described, even legal coordination would not slow the spending. Silicon Valley executives describe falling behind in AI as an existential threat, more terrifying to them than tariffs or trade wars. The result is a spending spiral with no off-ramp. But the financial carnage is not even the worst part.

The Demand Shock

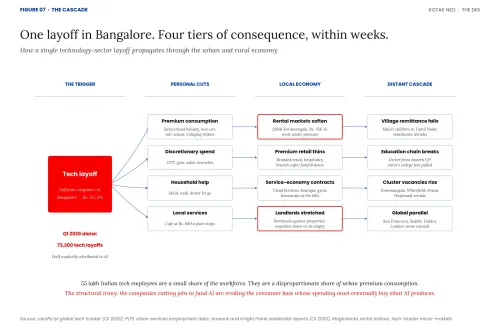

Here is what makes the current moment uniquely destructive. Companies are simultaneously spending billions to build AI infrastructure while systematically destroying the consumer base whose spending power must eventually buy whatever AI produces. In the first quarter of 2026 alone, technology companies laid off 73,200 workers globally. Oracle's mass layoffs. Meta's restructuring. Snap's efficiency drive. Amazon's "streamlining". Half of these layoffs are explicitly attributed to AI and automation.

The economic consequences begin playing out within weeks, not years.

When a software engineer in Bangalore earning ₹35 lakh a year loses their job, the consequence is not abstract. The Switzerland holiday gets cancelled. The international school becomes a CBSE alternative. The new car is postponed. The gym membership, the OTT subscriptions, the ₹35,000 Coldplay or Shakira tickets: all of it is gone. The customer base sustaining urban India's premium consumption is suddenly worried about its next pay cheque.

But the most immediate cuts are closest to home. The live-in maid is let go. The cook stops coming. The driver is told the family will manage with cabs. The weekly brunch at the Koramangala or BKC café quietly stops. The salon visits become quarterly. All of it gets reviewed in a household budget meeting nobody was expecting.

And this is where the cascade really begins. The maid supporting three children in a Tamil Nadu village now sends home a fraction of what she did. The driver from eastern Uttar Pradesh stops the remittance paying his sister's college fees. The café in Koramangala built around the ₹600 brunch sees its footfall thin within weeks. Multiply this across lakhs of households and an economic shock travels from urban India's affluent neighbourhoods back to rural and small-town India through the remittance economy.

Figure 07 · The Cascade

The rental markets are where this becomes visible fastest. Two-bedroom apartments in Koramangala commanded ₹70,000 to ₹1 lakh a month through 2023 and 2024, sustained by tech professionals sharing rent. Powai saw similar increases. When tech hiring slows, rental demand softens within weeks. Landlords who borrowed against these properties find themselves negotiating downward or sitting on empty flats.

India's roughly 55 lakh technology employees are a small share of the working population but a disproportionately large share of urban premium consumption: premium housing, car sales, international travel, branded retail, private schools, the entire experience economy. The cloud kitchens, the boutique gyms, the home-stays in the hills: all of it was built on the assumption this consumer base would keep growing. If technology layoffs continue at the current pace, that assumption breaks.

This is not a uniquely Indian story. The same cascade plays out in every major technology hub. In San Francisco, laid-off engineers are cancelling Lake Tahoe weekends (the Bay Area's favoured ski getaway) and the daily lunch habit. In Seattle, Amazon and Microsoft cuts are hitting downtown restaurants. In Dublin, premium rentals are under pressure. London, Singapore, Toronto, Berlin: each has its own version of Koramangala, its own maid, driver and café sustained by well-paid technology workers.

The structural irony runs through this entire story: the layoffs that help fund AI infrastructure shrink the very consumer base that must eventually pay for whatever AI produces. The prisoner's dilemma plays out across the entire urban economy every time a layoff email goes out, and the shock waves travel further and faster than most investors currently understand.

Destroying The Talent Pipeline: The Slower-Burn Crisis

The demand cascade plays out within weeks. The talent pipeline crisis is the slower-burning second consequence of the same layoffs, one that will not show up in earnings reports for another three to five years, and will be no less catastrophic when it does.

Look closer at who is being cut. Junior engineers. Software testers. Documentation specialists. The people who do the unglamorous work that nobody notices until it is gone.

Junior engineers do not just write simple code. They do testing. They write documentation. They debug edge cases. They sit in architecture reviews. This is where they learn to recognise patterns, build intuition about system design, and develop the tacit knowledge that eventually makes them senior engineers.

When you eliminate this entire tier of the workforce, you are not just cutting costs. You are breaking the talent pipeline. In five years, when companies need senior engineers who deeply understand their systems and can design complex AI integrations, where will they find them? You cannot hire a senior engineer with ten years of experience if you spent the last five years not hiring juniors.

The logic is impeccable in the short term. AI can handle basic testing. Documentation can be automated. Why pay a junior engineer ₹8 lakh a year when Claude or ChatGPT can do 70% of the work for a fraction of the cost? The problem emerges in the long term. That junior engineer doing testing is not just executing tasks. They are learning the system, building mental models, developing judgement: things that require years of immersion and cannot be short-circuited.

The Cruel Economics Of Getting Disrupted While Funding Your Own Disruption

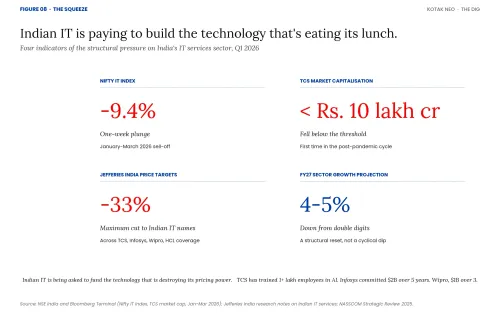

Nowhere is the squeeze more visible than in India's IT sector. TCS has trained over one lakh employees in AI skills. Infosys is investing $2 billion in AI over five years. Wipro committed $1 billion over three years. These are not small bets. They are existential investments for companies that built empires on a simple value proposition: Indian engineers cost a fraction of their American counterparts.

Now that value proposition is being automated away. And their clients know it. A procurement manager at a Fortune 500 firm recently described using AI as a negotiating weapon. He suggested his company might replace its Indian IT vendor entirely with AI-assisted development. The vendor renewed the contract at a 30% discount.

This is the dual bind. Indian IT companies are being forced to spend billions on AI infrastructure while simultaneously watching clients use the threat of that same AI to extract deep price cuts. They are funding the technology that is destroying their pricing power, because not funding it means losing all their business.

The market has noticed. The Nifty IT index plunged 9.4% in one week. TCS's market capitalisation fell below ₹10 lakh crore. Jefferies cut price targets on Indian IT companies by up to 33%. Sector growth projections have collapsed from double digits to 4–5% for FY27. These companies are caught in the same game as the global hyperscalers, except they are playing from a position of structural disadvantage. Their entire business model was built on labour cost arbitrage. And AI destroys arbitrage.

Figure 08 · The Squeeze

The Bull Case, And Where It Breaks

Despite the spending, the layoffs and the shockwaves now spreading from Bangalore to San Francisco, plenty of investors and analysts still believe the AI infrastructure bet will eventually pay off: that this is not a prisoner's dilemma, that the spending is rational, and that the framing in this piece is wrong. Their argument rests on four pillars.

The first is the Amazon Web Services precedent. Amazon spent fifteen years absorbing crushing capex losses while analysts called it reckless. That infrastructure became the most profitable platform business in modern technology history, and bulls argue AI follows the same shape. The second is enterprise lock-in. Every Fortune 500 company building on Azure OpenAI, AWS Bedrock or Google Vertex is signing decade-long platform commitments with enormous switching costs. The third is the demand curve. Token volumes are growing thousandfold globally and NVIDIA's data centre revenue is hyperbolic. The fourth is the strategic backstop. Washington implicitly co-signs this race through export controls, defence partnerships and procurement. The infrastructure will be built regardless of commercial outcomes.

Each pillar has a serious problem. The AWS precedent breaks down on competitive structure. AWS earned its margins in a stable three-player cloud oligopoly. AI infrastructure faces five well-funded American hyperscalers, four Chinese giants, plus OpenAI and Anthropic. Eleven serious competitors with similar capabilities do not produce AWS economics. They produce fibre optic economics.

The enterprise lock-in argument assumed proprietary frontier models would dominate. Open-source models (Meta's Llama, Mistral, DeepSeek, Alibaba's Qwen) have closed the gap, and the procurement manager who extracted a 30% discount earlier in this story is evidence that enterprise buyers are not locked in. The demand curve has a measurement problem. Much of the token volume is unmonetised free-tier consumption, particularly in China. Revenue per token is falling under competitive pressure. And OpenAI reportedly accounts for a large share of Microsoft's AI cloud revenue, meaning much of the apparent demand is recursive accounting between affiliated entities.

The strategic backstop is real but conditional. Washington supports the race for strategic capability, not for shareholder returns. The government will ensure the infrastructure exists. It will not ensure that the equity holders are compensated.

But here is what the bull case does not address. Every pillar above assumes a consumer base capable of buying whatever AI eventually produces. The same capex strategy that builds the infrastructure is eroding the consumer demand base that underwrites the broader economy. There is no path to monetising AI at scale without a healthy global consumer economy, and the companies building that infrastructure are systematically dismantling that economy. The bulls have a story for every concern except this one. It is the structural argument at the heart of the prisoner's dilemma.

What This Means For Indian Markets And Retail Investors

For the Indian retail investor, this story matters in four direct ways.

First, the Nifty 50 and Nifty IT now move in tighter correlation with the Nasdaq than at any point in the last decade. When American technology stocks sell off, Indian IT follows within hours. The diversification benefit Indian investors once got from holding domestic equities against global volatility has weakened considerably.

Second, most Indian mutual funds with international exposure are heavily concentrated in the same handful of names: Apple, Microsoft, Nvidia, Alphabet, Amazon and Meta together account for the majority of US equity exposure in flagship international and feeder funds. If you hold an international fund of funds, a flexicap with global allocation, or a Nasdaq 100 ETF, you are directly exposed to the AI capex bet, whether you intended to be or not.

Third, the structural threat to Indian IT services is now a structural threat to a meaningful slice of every diversified Indian portfolio. TCS, Infosys, Wipro and HCL Tech together carry significant weight in benchmark indices and are core holdings in most large-cap, multi-cap and ELSS mutual funds. The traditional steady cash flows and predictable dividends that made them investor favourites are under genuine structural pressure, not cyclical weakness that reverses next quarter.

Fourth, and most underpriced, the consumer discretionary thesis that has held up much of the Indian equity earnings story is built on the same urban premium consumer whose income is now under threat. The same logic applies to the global consumer brands sitting inside your international funds. If you hold premium auto, premium real estate, hospitality, travel, branded retail names (Indian or global), you are exposed to a demand shock that is now structural rather than cyclical, and worldwide rather than national.

The risk for investors, Indian and global, is asymmetric. If the AI revolution fully materialises and these companies successfully monetise their massive infrastructure investments, they will recover their spending and then some. But if demand plateaus, if efficiency gains outpace revenue growth, if the infrastructure becomes stranded, the downside is severe, and it shows up across multiple sectors and multiple geographies simultaneously.

The Race Everyone Must Run

So here we are.

Unlike the contestants in Squid Game, these companies cannot simply walk away. The outside world is not an escape. It is oblivion. Nokia chose not to race on smartphones. BlackBerry chose to stick with keyboards. Yahoo chose not to invest in search. The companies that left the race are case studies in business school textbooks.

Somewhere in this machinery, a junior engineer at TCS finishes a testing assignment, writes documentation, debugs an edge case, and unknowingly builds the intuition that might make them a senior architect in 2031. If they are not laid off first. If their company still exists. If there are still jobs for senior architects who understand systems built by humans rather than generated by AI. And if there is still a customer base wealthy enough to buy whatever it is they eventually build.

The prize for winning this race is the chance to run the next one. The penalty for losing: we are about to find out.

Sources

- McKinsey, Superagency in the Workplace: Empowering People to Unlock AI's Full Potential (January 2025)

- Boston Consulting Group, The Widening AI Value Gap: Build for the Future 2025 (September 2025)

- Nielsen India Technology Adoption Report 2025

- Deloitte UK Digital Transformation Study 2025

- Goldman Sachs Global Investment Research, equity strategy notes on AI capex cycle

- Bank of America Global Research, hyperscaler capex and operating cash flow analysis

- Morgan Stanley research on hyperscaler debt issuance projections

- Allianz Research, "AI Capex Cycle" briefing, March 2026

- Goldman Sachs, "China's AI providers expected to invest $70 billion in data centers amid overseas expansion," November 2025

- South China Morning Post, ByteDance AI capex coverage, May 2026

- Reuters, "Tencent pledges higher AI investment in 2026 after chip curbs hit capex plans," March 2026

- MIT Technology Review, "China built hundreds of AI data centers to catch the AI boom. Now many stand unused," March 2025

- Trivium China, "How China's AI infrastructure push supports its drive for tech self-sufficiency," November 2025

- Bank of America estimates on state-backed Chinese AI investment, 2026

- CNBC, "Tech AI spending approaches $700 billion in 2026, cash taking big hit," 6 February 2026

- MarketWise, "Hyperscaler AI Investment to Surge 71% in 2026"

- Investing.com analysis of Q4 2025 and Q1 2026 hyperscaler earnings, including Sundar Pichai's "elements of irrationality" commentary

- CreditSights, hyperscaler capex 2026 estimates and Oracle credit analysis

- NASSCOM Strategic Review 2025, Indian IT sector workforce data

- Periodic Labour Force Survey (PLFS), Ministry of Statistics and Programme Implementation, urban services employment data

- Anarock Property Consultants, residential rental and capital values reports for Bangalore and Mumbai, Q1 2026

- Knight Frank India residential market reports, Bangalore and Mumbai premium segments, Q1 2026

- Magicbricks and Housing.com rental index data, tech-cluster micro-markets (Koramangala, Whitefield, Powai, Hinjewadi)

- Layoffs.fyi global tech layoff tracker, 2024–2026

- USD-INR exchange rate (₹95 per USD): Reserve Bank of India reference rate, May 2026

- NSE India and Bloomberg Terminal data: Nifty IT index movement and TCS market capitalisation, January–March 2026

- Company financial reports and investor presentations: Amazon, Alphabet, Microsoft, Meta, Oracle quarterly earnings calls, 2025–2026

- North American Data Centers Association and US Energy Information Administration, infrastructure planning data Q1 2026

- Jefferies India research notes on Indian IT services price target revisions

This article is for informational purposes only and does not constitute financial advice. It is not produced by the desk of the Kotak Securities Research Team, nor is it a report published by the Kotak Securities Research Team. The information presented is compiled from several secondary sources available on the internet and may change over time. Investors should conduct their own research and consult with financial professionals before making any investment decisions.

Investments in securities market are subject to market risks, read all the related documents carefully before investing. Brokerage will not exceed SEBI prescribed limit. The securities are quoted as an example and not as a recommendation. SEBI Registration No-INZ000200137 Member Id NSE-08081; BSE-673; MSE-1024, MCX-56285, NCDEX-1262.
